Spaces

In Experiment 5, we explored how voice commands and body rhythms could manipulate digital environments to simulate the experience of lucid dreaming. Inspired by films like Inception and Ant-Man, we used speech recognition to alter scanned 3D spaces, dynamically distorting familiar environments like classrooms and bedrooms.

Tools: Polycam, Scaniverse, TouchDesigner, p5.js

By integrating subtle body rhythms such as pulse, breath, and blink, we echoed the subconscious movements of dreams, creating immersive, surreal transformations. This experiment highlighted the connection between sound, control, and visual manipulation, offering insight into how abstract data can drive interactive experiences and reshape digital landscapes in real time.

Changing Familiar Environments

Bedroom

- Room

- Scatter

- Swell

Design Studio

- Split

- Distort

- Surround

- Studio

Speech Recognition

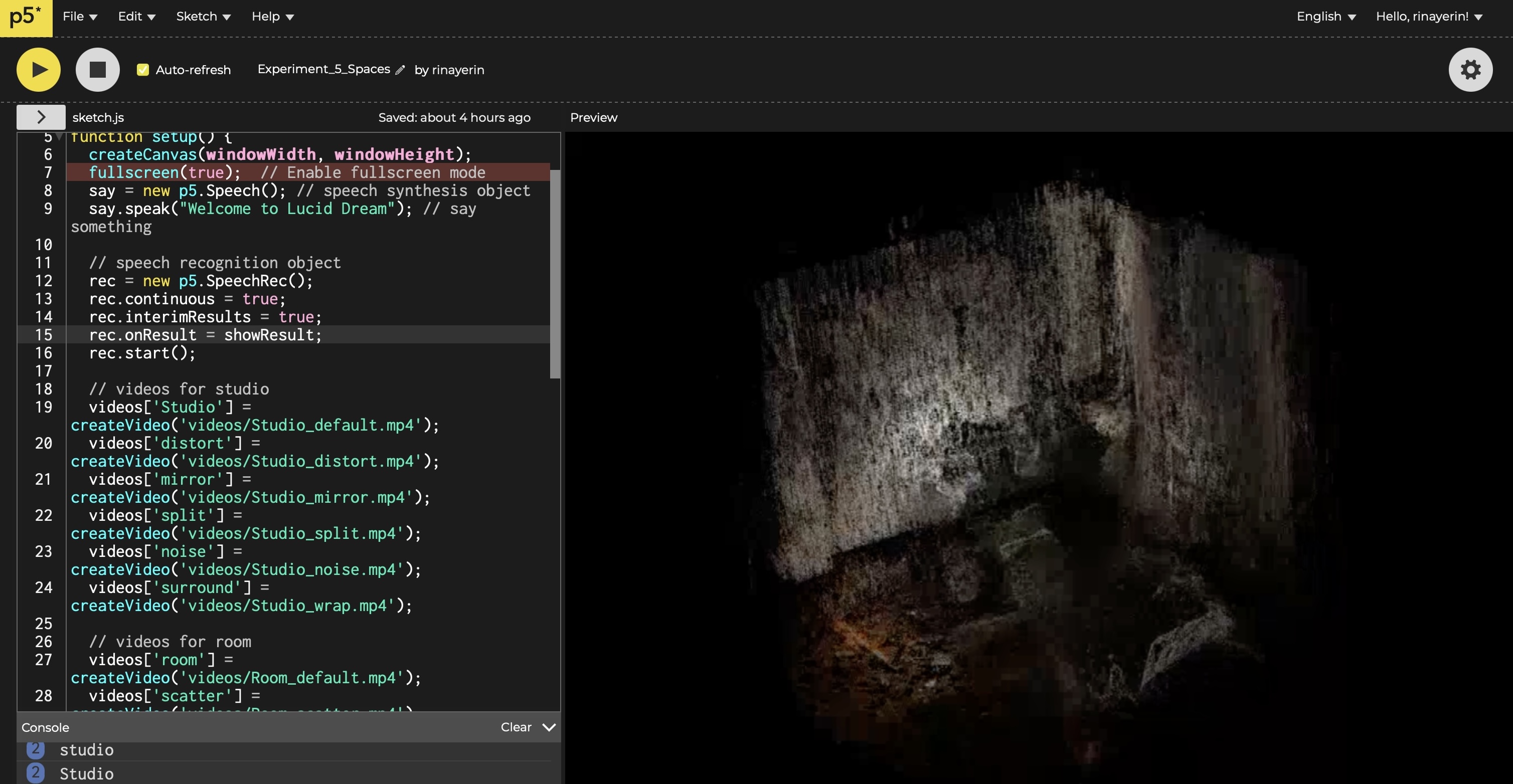

We used speech recognition to capture the user's voice, triggering corresponding visual transformations. Commands such as “distort,” “mirror,” or “scatter” would modify the visual spaces, which were originally scanned 3D environments like classrooms and bedrooms. These visuals, exported from TouchDesigner, were integrated into p5.js and played as videos that changed based on the spoken commands. This interaction gave users the feeling of reshaping their surroundings in real time, much like controlling the landscapes in a dream.
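As a rough illustration, the step from a spoken phrase to a video clip could be sketched in JavaScript as below. The clip file names and the function names `findCommand` and `nextClip` are hypothetical, and the part that turns speech into a transcript string is left out because it depends on the speech recognition library used.

```javascript
// Hypothetical mapping from spoken totem words to exported video clips.
// The file names are placeholders, not the project's actual assets.
const CLIPS = {
  distort: "studio_distort.mp4",
  mirror: "studio_mirror.mp4",
  scatter: "room_scatter.mp4",
};

// Return the first known command word found in a transcript, or null.
function findCommand(transcript, clips = CLIPS) {
  const words = transcript.toLowerCase().match(/[a-z]+/g) || [];
  for (const word of words) {
    if (Object.prototype.hasOwnProperty.call(clips, word)) return word;
  }
  return null;
}

// Decide which clip should play next; keep the current one when nothing matches.
function nextClip(transcript, currentClip, clips = CLIPS) {
  const command = findCommand(transcript, clips);
  return command === null ? currentClip : clips[command];
}
```

Keeping the current clip when no command is recognized means background speech does not interrupt the visuals.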


Visual Sound Score

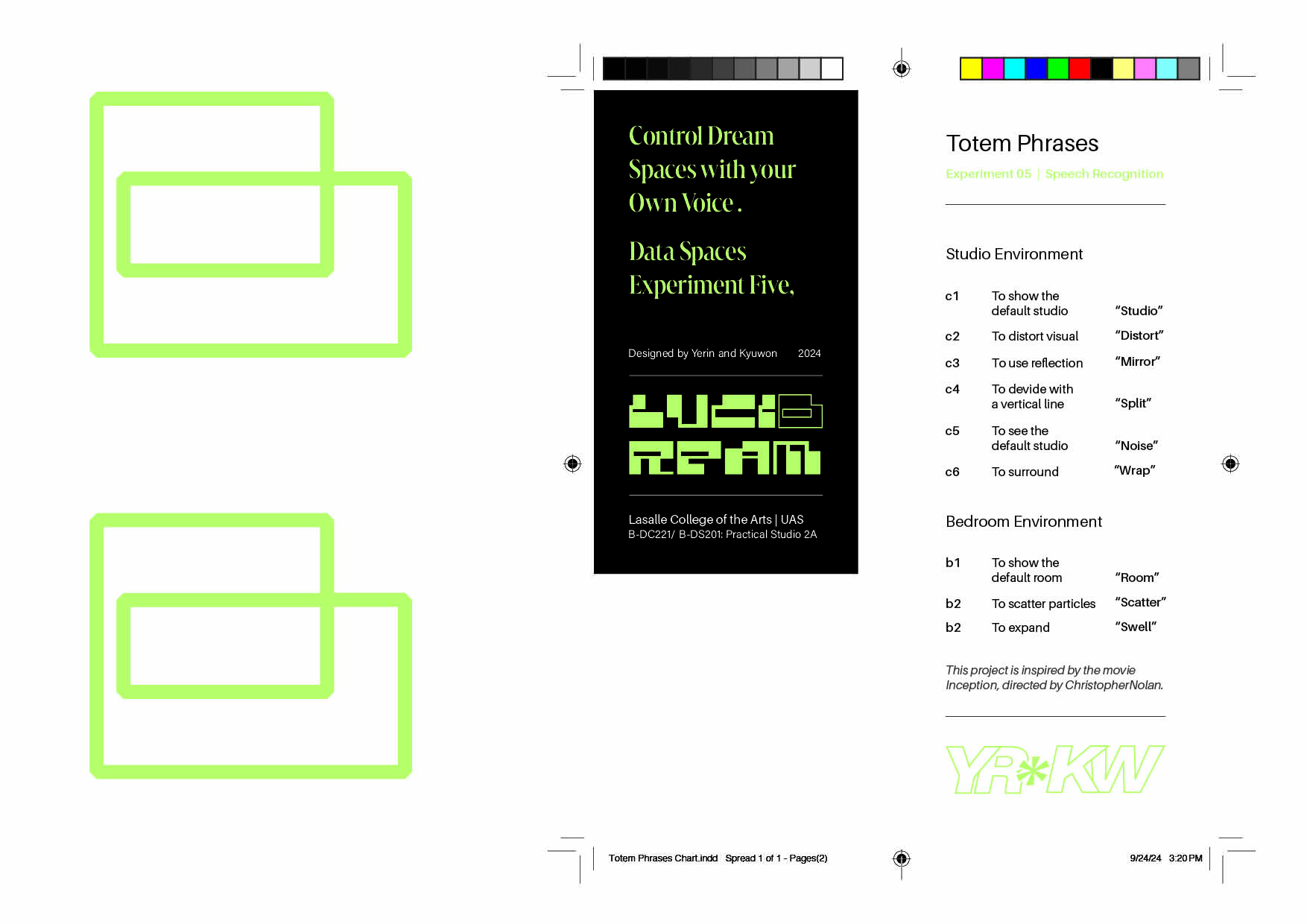

Expressing data as a narrative

We designed a Totem Phrases Chart to represent the corresponding actions triggered by voice commands. This score maps the totem words to their visual changes and serves as a guide for interaction.


Tools: Adobe Illustrator, InDesign.

Working Process


Ideation

Lucid Dream and Body Rhythms

At the heart of our experiment was the concept of transitioning from reality into a dreamscape. We imagined what it would be like to shrink into an abstract version of familiar spaces, inspired by the Quantum Realm from Marvel's Ant-Man. This idea of moving into smaller, surreal worlds while sleeping influenced how we manipulated the scanned environments.

We also integrated body rhythms—pulse, breath, and blink—into the visuals, using them as subconscious drivers for the dream.

- Pulse: Influenced the expansion or contraction of the 3D space, as if the dream environment were "breathing" in time with the user's heartbeat.

- Breath: Added smooth, wave-like distortions to the visuals, symbolizing the ebb and flow of respiration.

- Blink: Created quick, sudden changes in the visuals, mimicking the brief shifts in focus that occur when one blinks.
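One possible way to turn the three rhythms above into animation parameters is sketched below in JavaScript. Every function name, rate, and amplitude here is an illustrative assumption and not the values used in the project: pulse becomes a scale factor, breath a slow travelling wave, and blink a short on/off impulse.

```javascript
// All constants below are illustrative assumptions, not measured values.

// Pulse: a scale factor oscillating around 1 at the heart rate.
function pulseScale(tSeconds, bpm = 60, depth = 0.1) {
  const beatsPerSecond = bpm / 60;
  return 1 + depth * Math.sin(2 * Math.PI * beatsPerSecond * tSeconds);
}

// Breath: a slow wave offset that also varies with position x across the space.
function breathOffset(tSeconds, x, breathsPerMinute = 12, amplitude = 20, wavelength = 200) {
  const phase = 2 * Math.PI * ((breathsPerMinute / 60) * tSeconds - x / wavelength);
  return amplitude * Math.sin(phase);
}

// Blink: 1 during a short window after the last blink, 0 otherwise.
function blinkActive(tSeconds, lastBlinkSeconds, durationSeconds = 0.15) {
  const elapsed = tSeconds - lastBlinkSeconds;
  return elapsed >= 0 && elapsed < durationSeconds ? 1 : 0;
}
```

In a draw loop, `pulseScale` could multiply the size of the scanned space, `breathOffset` could displace points, and `blinkActive` could switch a sudden effect on for a few frames.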

Visual Creation

- Scaniverse, Polycam

- TouchDesigner

- p5.js_Audio

- 3D Scanner

Scaniverse, Polycam

Using the mobile apps Polycam and Scaniverse, we scanned physical spaces (a classroom and a bedroom). This allowed us to bring real-world environments into a digital space, capturing details in a way that felt natural yet surreal when manipulated.

* Initially, the scans weren’t working well because of lighting and surface reflection problems, but switching to more structured spaces, like rooms, improved the output.

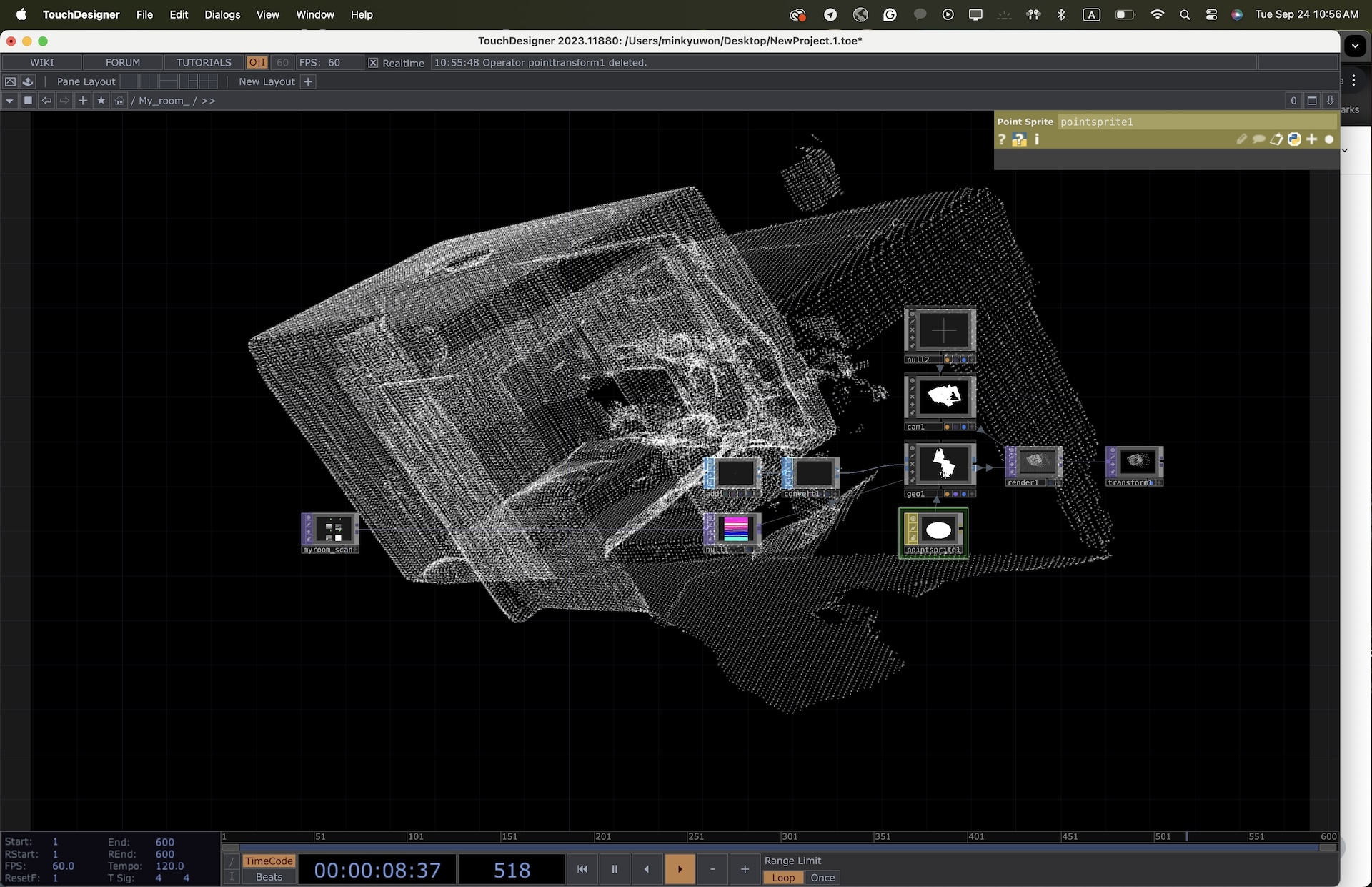

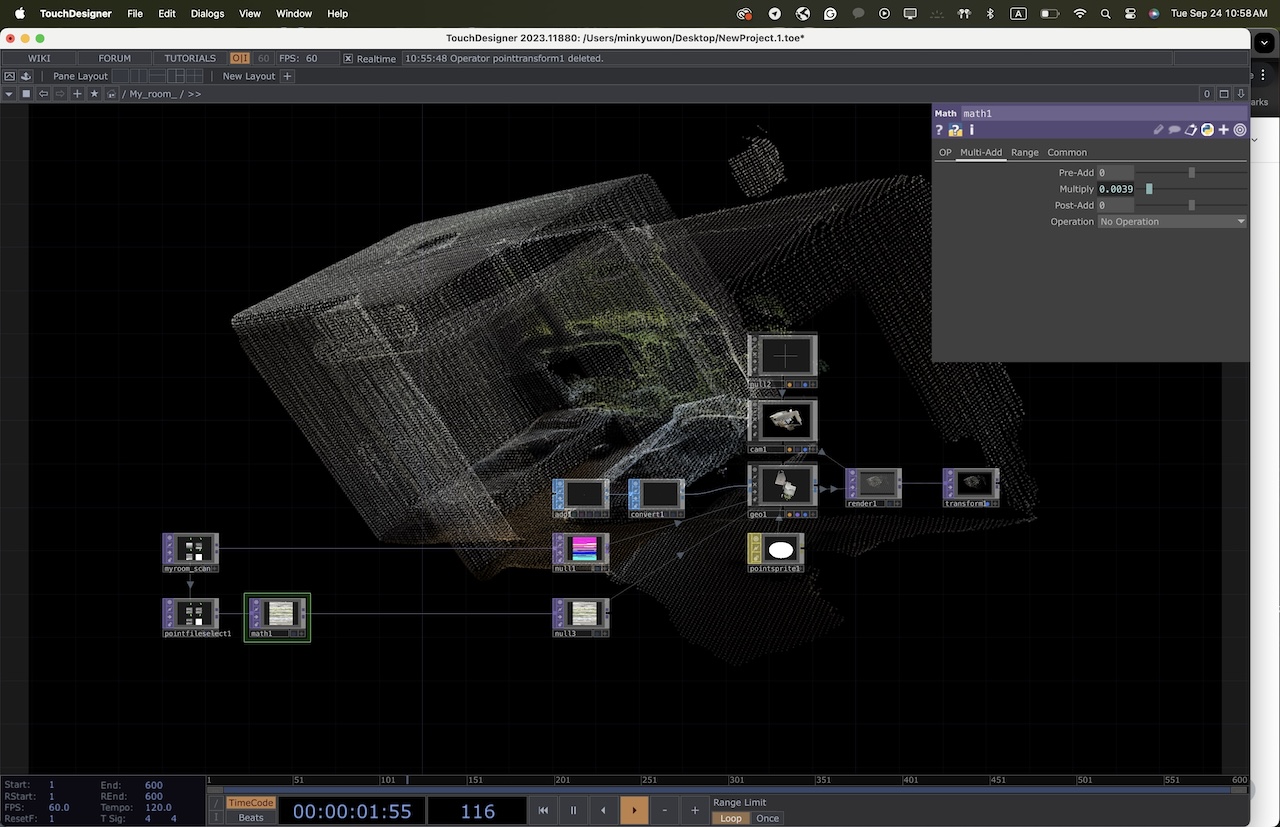

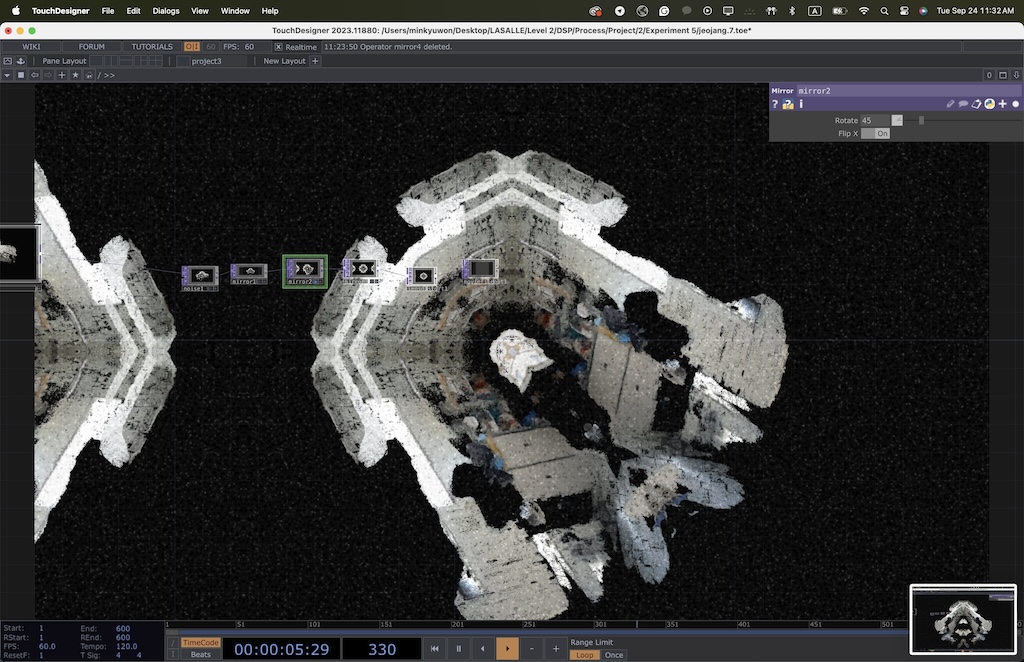

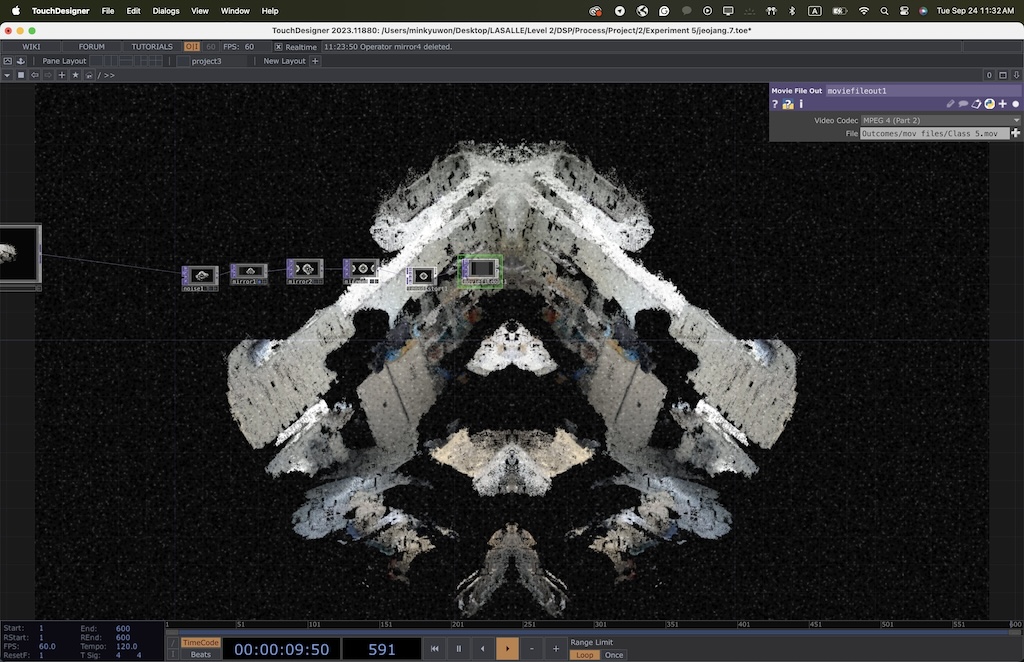

- TouchDesigner

* Link to Referred Tutorial

After scanning, we imported the models into TouchDesigner for processing. We applied transformations (e.g., distort, mirror, scatter) in response to specific voice commands. The abstract transformations mimicked the shifting, dreamlike qualities of controlling one's dream landscape.

- LFO: Provides rhythmic oscillations that drive animations, mimicking the unpredictable flow of dreams.

- Noise: Adds organic, chaotic textures, representing the unconscious mind.

- Translate: Moves visuals, simulating movement through a dream space.

- Keyboard In: Allows interaction, symbolizing control within a lucid dream.

- Threshold: Sharpens and defines certain elements, contrasting between clarity and obscurity in dream states.

- Mirror: Reflects elements, creating symmetry and adding to the surreal quality.

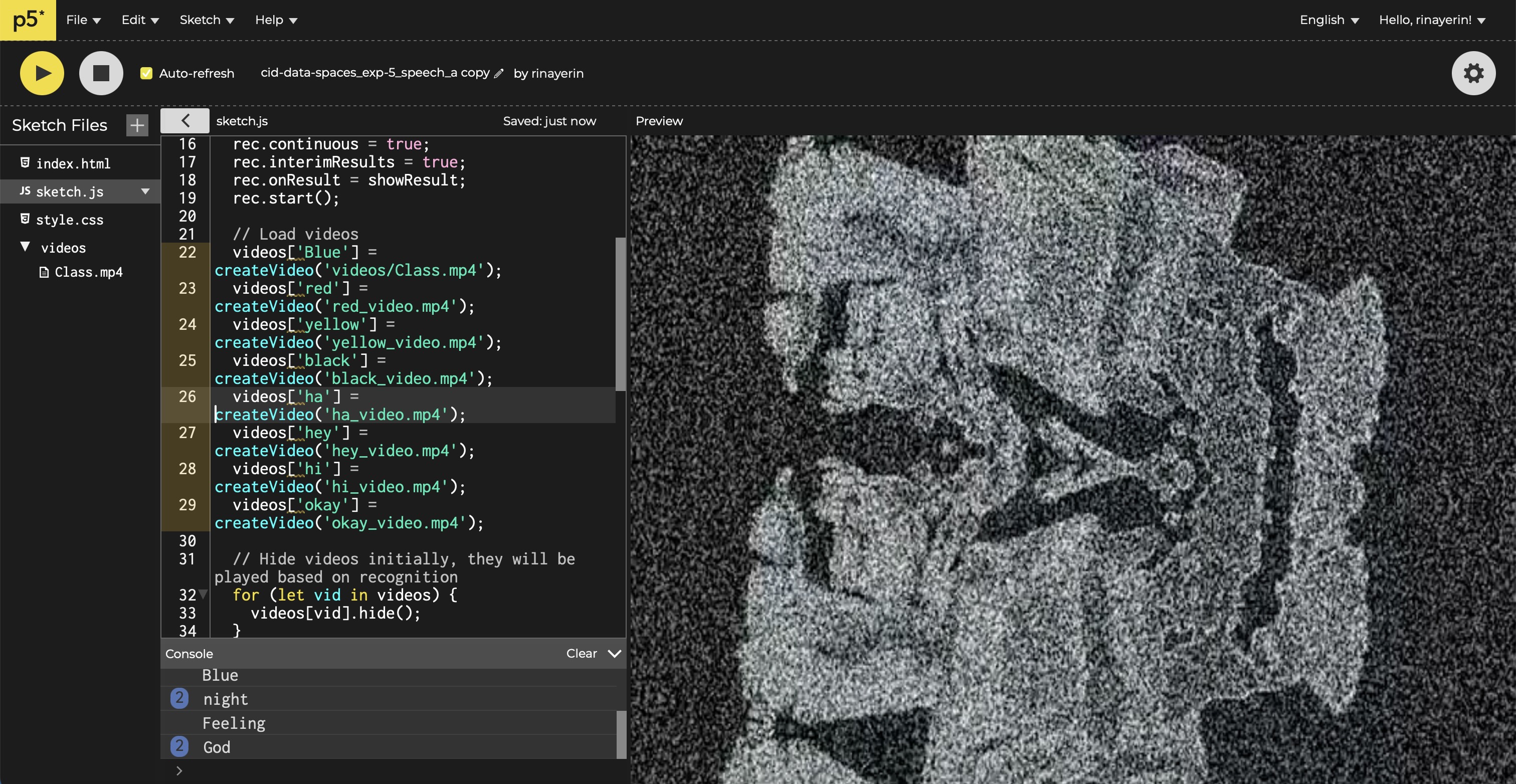

- p5.js (Speech Recognition)

* Link to the sketch

We used speech recognition to capture the user’s voice and trigger the corresponding visual changes. In p5.js, the visual responses were played as videos that changed dynamically based on the spoken commands.

- Throughout the process, we faced challenges with video rendering. Initially, the video was displayed twice due to the simultaneous use of .show() and image(). After resolving this, we encountered another issue where the video froze, which we fixed by adding .loop() to ensure smooth playback. These challenges helped us better understand the handling of HTML elements and real-time updates in p5.js.
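The two video fixes described above can be sketched in JavaScript roughly as follows. This is a minimal sketch, not the project's actual code: `prepareClip` and the file name are hypothetical, and hiding the DOM element with `.hide()` and muting with `.volume(0)` are assumptions about one common way to avoid the double display and autoplay problems.

```javascript
// Prepare a p5 video element so it is drawn only through image().
// `video` is expected to be the p5.MediaElement returned by createVideo().
function prepareClip(video) {
  video.hide();    // hide the default DOM <video> so it is not shown twice
  video.volume(0); // assumption: muted playback, since browsers often block autoplay with sound
  video.loop();    // start playback and restart whenever the clip ends
  return video;
}

// In a p5.js sketch this might be used as follows (file name is a placeholder):
//
//   let clip;
//   function setup() {
//     createCanvas(640, 360);
//     clip = prepareClip(createVideo("room_default.mp4"));
//   }
//   function draw() {
//     image(clip, 0, 0, width, height); // the only place the video is drawn
//   }
```

Drawing the video only through `image()` keeps it inside the canvas, where it can be layered with other visuals.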

- Studio_Noise

- Room_Default

Through this experiment, we learned to bridge multiple platforms (Polycam, TouchDesigner, and p5.js) to create an interactive visual experience. Integrating the 3D scans and speech recognition was a challenge, but each step refined our approach to translating voice data into a compelling visual narrative.

By blending the ideas of body rhythm and dream control, this experiment represents a person's journey through a lucid dream where reality shifts and bends based on subconscious cues. Using particles to form spaces and distort their structure allowed us to capture the feeling of shrinking into an abstract space, much like in Ant-Man's Quantum Realm, and transforming one's surroundings with a simple breath, pulse, or blink.